Combining VHR Satellite Imagery and Deep Learning to Detect Landfills

- Skye Boag, Marketing Manager at European Space Imaging

Satellite imagery and remote sensing have been used extensively for monitoring land usage and land cover. With the increasing availability of Very High Resolution (VHR) satellite imagery captured within shorter revisit times, it has become possible to detect landfills and to characterise both their size and the types of waste being dumped. This has become increasingly important for the detection and monitoring of illegal waste dumps that have surfaced across the world. To prevent environmental disasters, it is crucial that these illegal landfills are identified efficiently and accurately. To address the slow pace of manual landfill detection, researcher Anupama Rajkumar, MSc, of SZTAKI investigated whether VHR satellite imagery provided by European Space Imaging could be combined with advanced deep learning techniques to automate the detection of waste landfills.

AN ENVIRONMENTAL CONCERN

Landfills are sites designated for dumping rubbish, garbage or other solid waste. They are usually designated by municipalities, with sufficient safeguards in place to contain pollution. Unfortunately, illegal garbage dumps have spread globally over time. Governments and regulatory bodies face many challenges in detecting these sites, and managing illegal landfills tends to be a tedious task that demands considerable resources while yielding a low level of confidence. Currently, governments rely heavily on tip-offs, an unreliable and inefficient method. Detection via video cameras and UAVs is both time-consuming and expensive, so governments are looking for innovative solutions to combat this worldwide issue. Prior to the use of remote sensing and advanced deep learning techniques, landfill detection was based on visual identification, followed by classification methods to identify and map illegal landfills.

DATA PREPARATION

Data forms an integral part of all machine learning pipelines. For any machine learning algorithm to extract features or learn a mapping from input variables, it must first be trained with high-quality data. The volume of data required depends on the features to be extracted, the complexity of the model being trained and the number of parameters that need tuning. The collection of data used by a machine learning model is called the dataset. To create the dataset used to train the deep learning models for semantic segmentation of landfills, a series of landfill sites across Europe captured with GeoEye-1, WorldView-2 and WorldView-3 was selected.

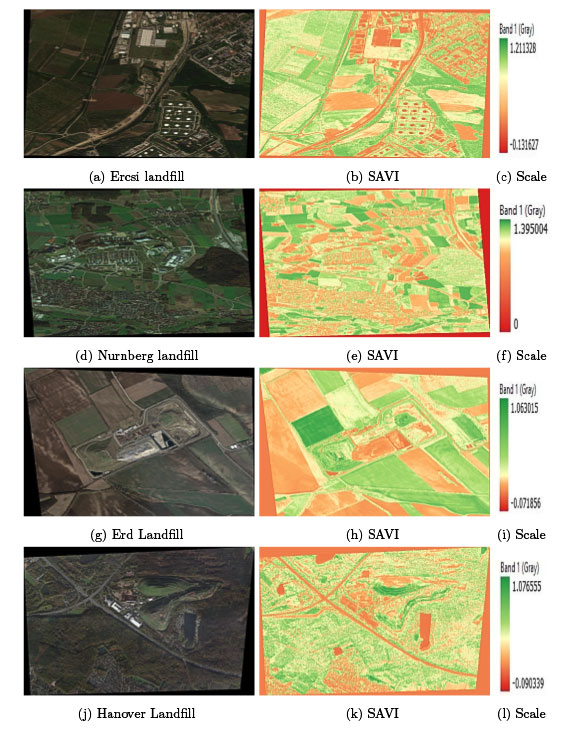

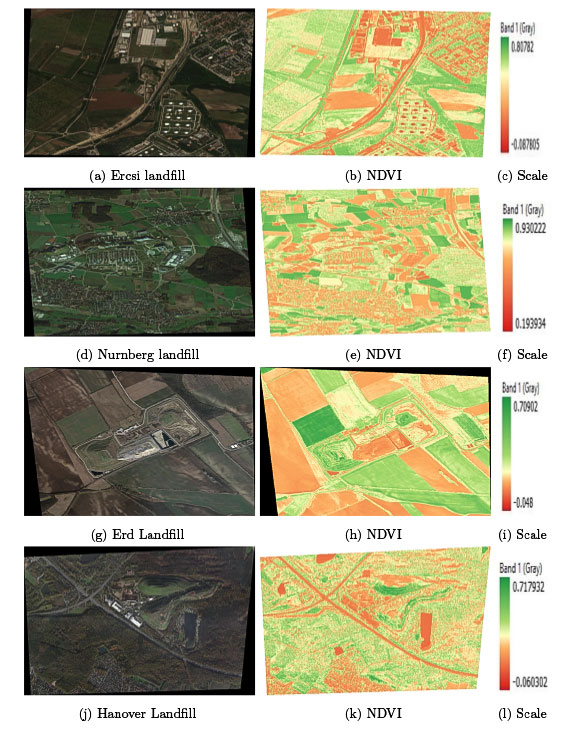

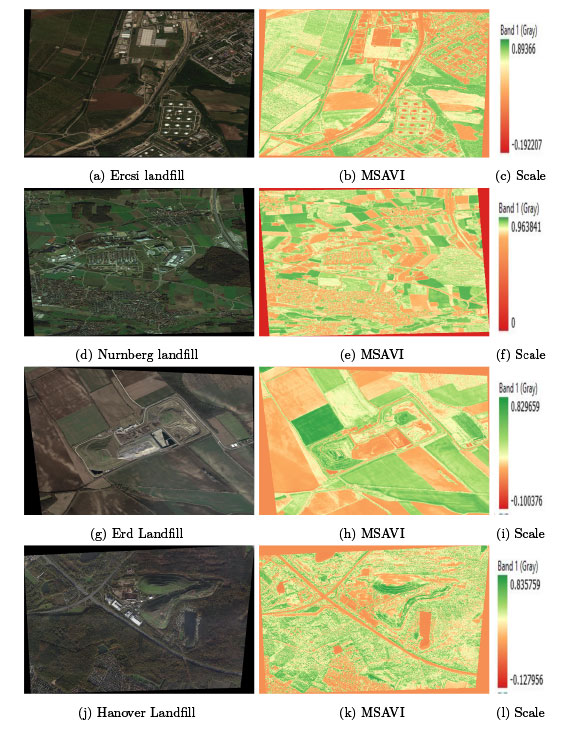

USING MULTISPECTRAL VHR IMAGERY TO CREATE VEGETATION INDICES (VIs)

The WorldView constellation from Maxar Technologies consists of multiple satellites whose multispectral sensors capture images of the Earth in several spectral bands, providing information that the naked eye cannot see. From this data, it is possible to create different vegetation indices (VIs) that give insight into the extent and quality of vegetation cover surrounding and occupying landfills. However, a mixture of soil, weeds and plants of interest makes a simple VI difficult to compute reliably. The VIs that were a vital component of this project were the Normalized Difference Vegetation Index (NDVI), the Soil Adjusted Vegetation Index (SAVI) and the Modified Soil Adjusted Vegetation Index (MSAVI). SAVI corrects NDVI for the influence of soil brightness in areas where vegetative cover is low, and MSAVI is used in areas where NDVI provides invalid data because the area is semi-arid or arid. These indices added significant information to the dataset for the tested deep learning models. They helped the models differentiate between landfill and vegetation, allowing for greater accuracy in predicting a landfill and estimating its size.
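As a minimal sketch of how these indices are commonly computed, the Python functions below apply the standard published formulas to per-pixel red and near-infrared (NIR) reflectance values. The function names, the soil factor of 0.5 and the sample reflectance values are illustrative assumptions and are not taken from the study. The MSAVI variant shown is the widely used closed form often called MSAVI2, since the article does not say which variant was used.

```python
import math


def ndvi(nir, red):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    denom = nir + red
    # Return 0.0 for pixels with no signal to avoid dividing by zero.
    return (nir - red) / denom if denom != 0 else 0.0


def savi(nir, red, soil_factor=0.5):
    """Soil Adjusted Vegetation Index with soil brightness factor L.

    L = 0.5 is a commonly used default (an assumption here).
    """
    return (1 + soil_factor) * (nir - red) / (nir + red + soil_factor)


def msavi2(nir, red):
    """Modified SAVI (MSAVI2 closed form), which needs no fixed soil factor."""
    a = 2 * nir + 1
    return (a - math.sqrt(a * a - 8 * (nir - red))) / 2


def index_map(func, nir_band, red_band):
    """Apply an index function pixel by pixel to two equally sized bands."""
    return [
        [func(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Hypothetical 2x2 reflectance bands (values between 0 and 1).
nir_band = [[0.5, 0.3], [0.2, 0.0]]
red_band = [[0.1, 0.3], [0.4, 0.0]]
ndvi_map = index_map(ndvi, nir_band, red_band)
```

In this toy example the top-left pixel, with high NIR and low red reflectance, gets a clearly positive NDVI, which is typical of vegetation, while pixels with similar red and NIR values score close to zero. In practice the same arithmetic would be applied to whole raster bands with an array library rather than nested lists.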

SCENE CLASSIFICATION

Scene classification is the procedure of determining the category that the pixels of an image belong to; in essence, it labels satellite imagery with predefined semantic categories. Classification can be performed at three levels: pixel level, object level and scene level. Pixel-level remote sensing image classification is still an active research topic in multispectral and hyperspectral remote sensing image analysis, whilst object-level and scene-level image classification are gaining popularity as VHR satellite imagery becomes more widely available. Object-level analysis aims at recognising objects in the images, while scene-level classification aims to assign image patches to a semantic class. Scene classification is an active field of research. It is a non-trivial and challenging problem because of the variance and spatial distribution of ground objects in the scenes.

THE DEEP DIVE ON DEEP LEARNING

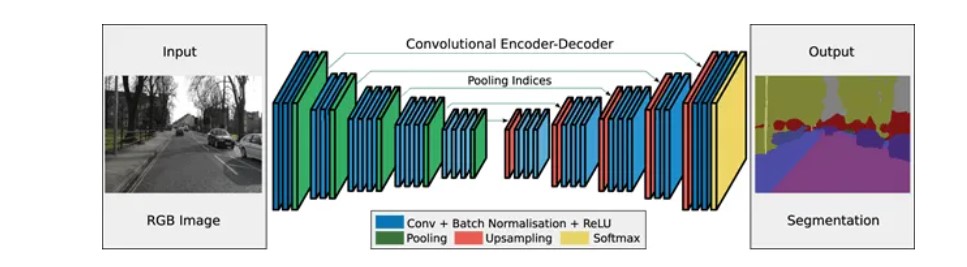

Deep learning can be considered a subset of machine learning. It is based on neural networks that learn and improve on their own from data. Each neural network consists of layers of nodes, with the nodes in each layer connected to those in adjacent layers. The more layers a network has, the deeper it is said to be. Such systems require large amounts of data to return accurate results. Because labelled datasets are in limited supply, training a deep learning model from scratch can be challenging and lead to unsatisfactory results. This can be remedied by techniques such as transfer learning and fine-tuning a pre-trained model. For the purpose of this study, deep learning models based on fully convolutional networks (FCNs) and UNet for semantic segmentation were tested. In semantic segmentation, a label is assigned to each pixel in an image, and pixels belonging to the same object class are grouped together.
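To make the per-pixel labelling concrete, the sketch below takes the class scores that a segmentation network might output for each pixel and assigns every pixel the class with the highest score. The class indices (0 for background, 1 for landfill), the score values and the pixel size are hypothetical and chosen only for illustration; they are not from the study.

```python
def label_pixels(scores):
    """Return a label map holding the index of the highest score per pixel.

    scores[row][col] is a list with one score per class.
    """
    return [
        [max(range(len(pixel)), key=pixel.__getitem__) for pixel in row]
        for row in scores
    ]


def class_area(label_map, cls, pixel_size_m):
    """Estimate the ground area (square metres) covered by one class."""
    count = sum(label == cls for row in label_map for label in row)
    return count * pixel_size_m ** 2


# Hypothetical 2x2 image with scores ordered as [background, landfill].
scores = [
    [[0.9, 0.1], [0.2, 0.8]],
    [[0.4, 0.6], [0.7, 0.3]],
]
label_map = label_pixels(scores)  # one class index per pixel

# Assumed pixel size of 0.5 m, used only to show the area arithmetic.
landfill_area = class_area(label_map, cls=1, pixel_size_m=0.5)
```

Because every pixel carries its own label, the size of a detected landfill can be estimated by counting the pixels assigned to that class and multiplying by the ground area of one pixel.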

The increasing demand for accurate and efficient identification and classification of objects has been met by the rise of several deep learning approaches for image segmentation and scene understanding, and deep learning has become the preferred method for remote sensing image analysis. In remote sensing, deep learning has been used for image analysis tasks such as image fusion, image registration, scene classification, change detection, segmentation, land use and land cover (LULC) classification and object-based image analysis (OBIA). Before deep neural networks became popular, the neural networks that form the basis of deep learning were already used by the remote sensing community for classification and detection tasks.

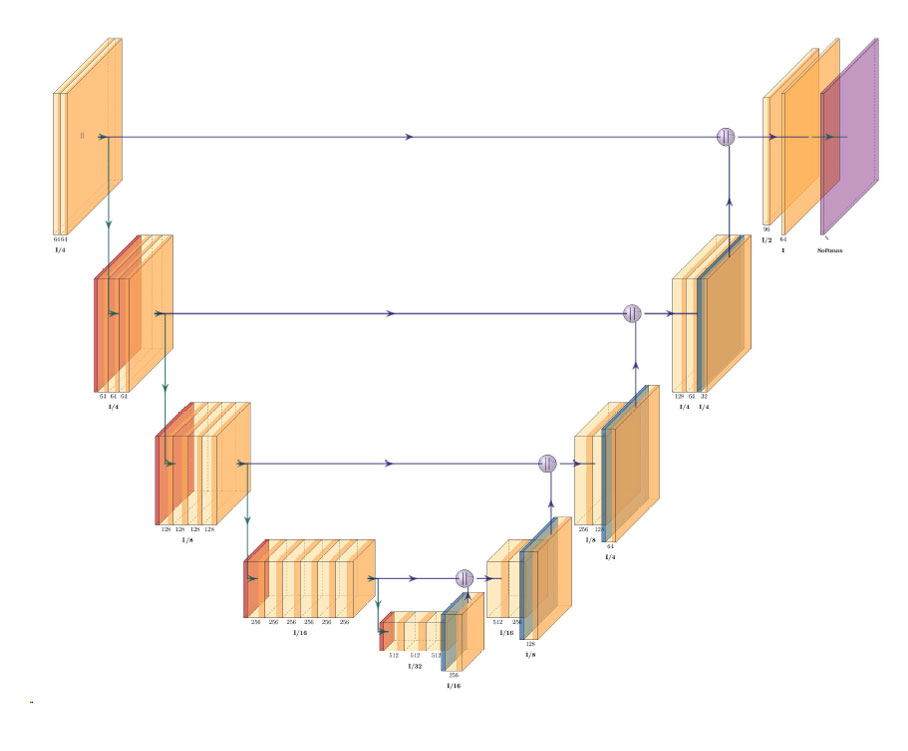

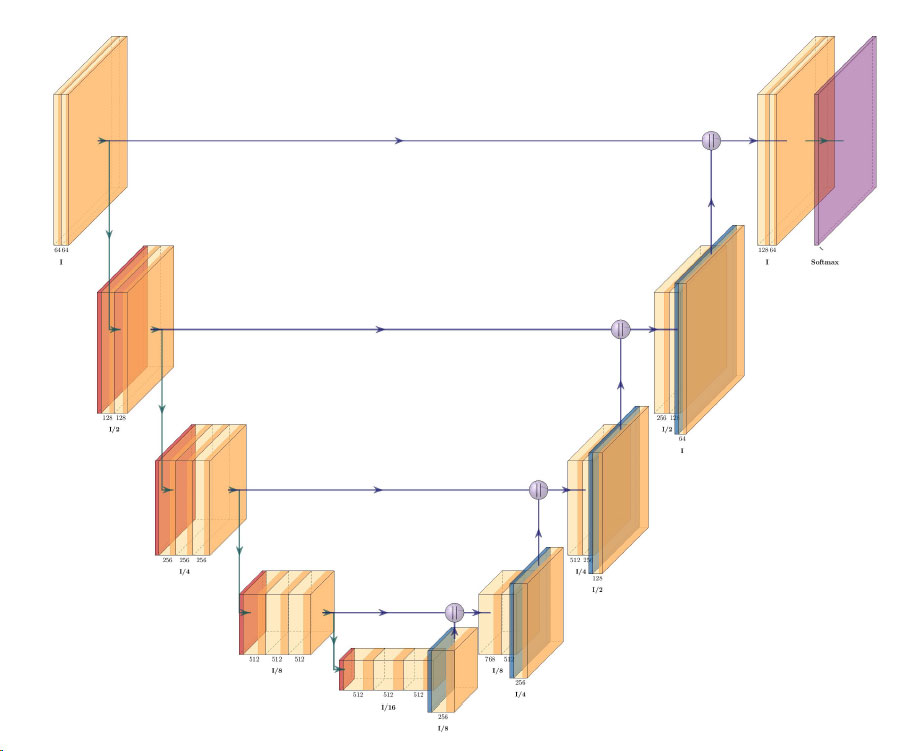

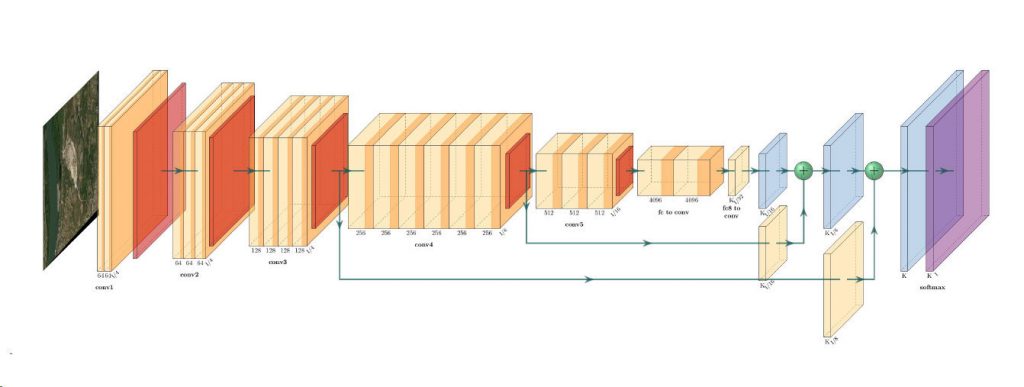

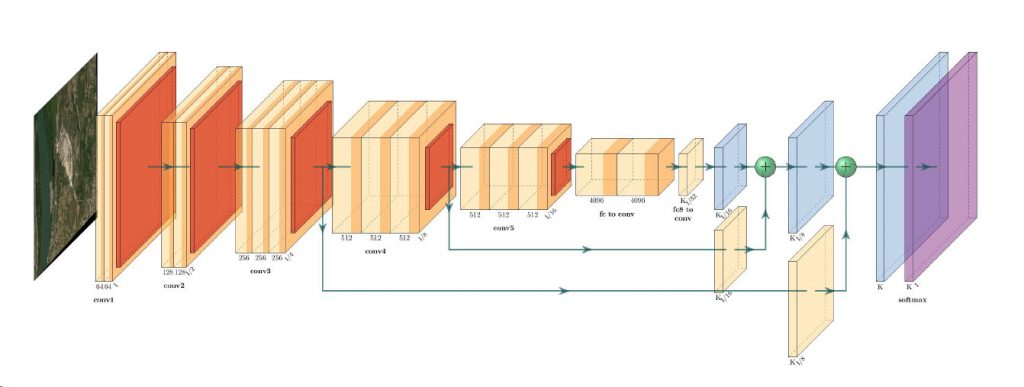

The basic architecture (FCN) in image segmentation consists of an encoder and a decoder. The encoder extracts features from the image through filters. The decoder is responsible for generating the final output. Source: https://neptune.ai/blog/image-segmentation-in-2020

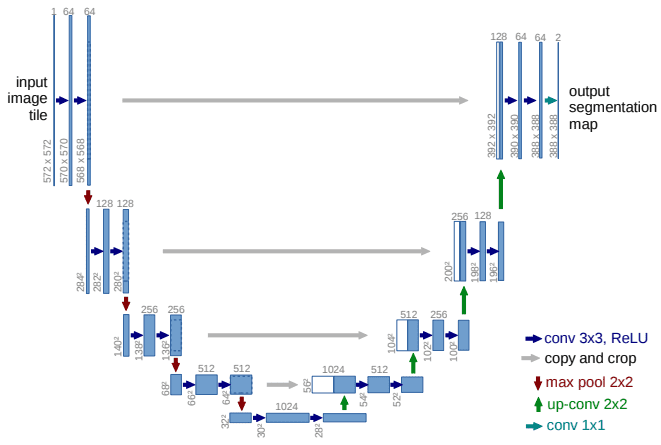

The basic architecture (UNet), showing the symmetrical contracting and expanding paths on the left and right sides respectively.

Source: https://theaisummer.com/unet-architectures/

PUTTING IT ALL TOGETHER

For the purpose of this study, the focus was on the automatic detection of landfills regardless of the waste type. After implementing several deep learning architectures for semantic segmentation and analysing the performance metrics and prediction results from each, it can be concluded that landfills can be detected from VHR multispectral imagery with an accuracy of approximately 90%. In the future, creating datasets with specific waste materials such as plastic or glass should be considered, as this could improve prediction accuracy. Whilst only convolutional neural networks, such as FCNs, were explored, future studies should test other architectures, such as RNNs and attention-based Vision Transformers (ViT), to explore how well they extract meaningful information from remote sensing data. These deep learning models have rarely been used with remote sensing data, so testing these architectures and studying their performance could provide further insights for detecting landfills remotely.
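The article does not state how the reported accuracy was computed. As an illustration only, the sketch below shows two metrics commonly used to evaluate semantic segmentation, pixel accuracy and intersection over union (IoU), for a predicted mask and a reference mask. The function names and the tiny example masks are assumptions made for this sketch.

```python
def pixel_accuracy(pred, truth):
    """Fraction of pixels whose predicted label matches the reference label."""
    total = 0
    correct = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            total += 1
            correct += int(p == t)
    return correct / total


def iou(pred, truth, cls=1):
    """Intersection over union for one class (1 = landfill, by assumption)."""
    intersection = 0
    union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            in_pred = p == cls
            in_truth = t == cls
            intersection += int(in_pred and in_truth)
            union += int(in_pred or in_truth)
    # Convention assumed here: if the class is absent from both masks,
    # the prediction is treated as a perfect match.
    return intersection / union if union else 1.0


# Hypothetical 2x3 masks: 1 marks landfill pixels, 0 marks background.
predicted = [[1, 1, 0], [0, 0, 0]]
reference = [[1, 0, 0], [0, 1, 0]]

accuracy = pixel_accuracy(predicted, reference)  # 4 of 6 pixels agree
landfill_iou = iou(predicted, reference)         # 1 shared pixel out of 3
```

In this toy case two thirds of the pixels are labelled correctly, yet the landfill IoU is only one third. This is why IoU is often reported alongside accuracy when the class of interest covers a small share of the image.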

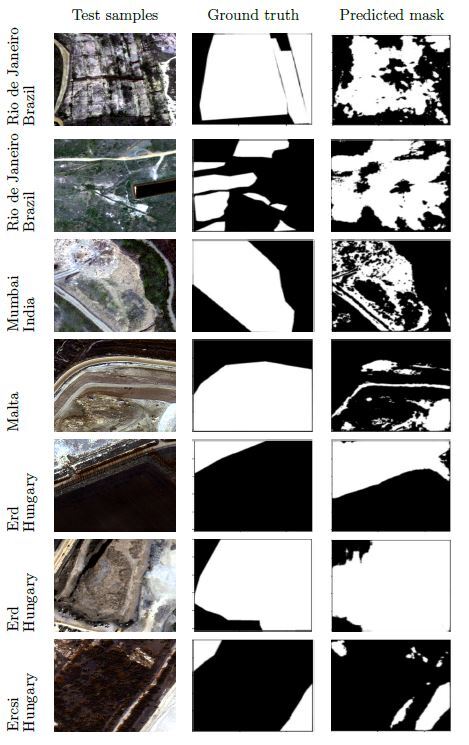

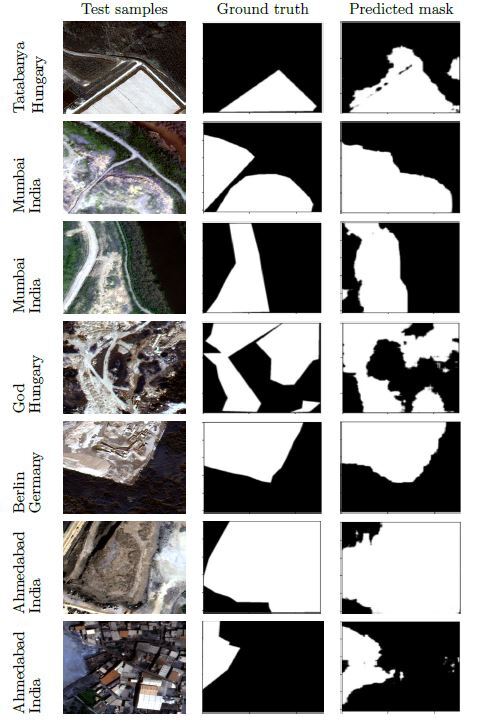

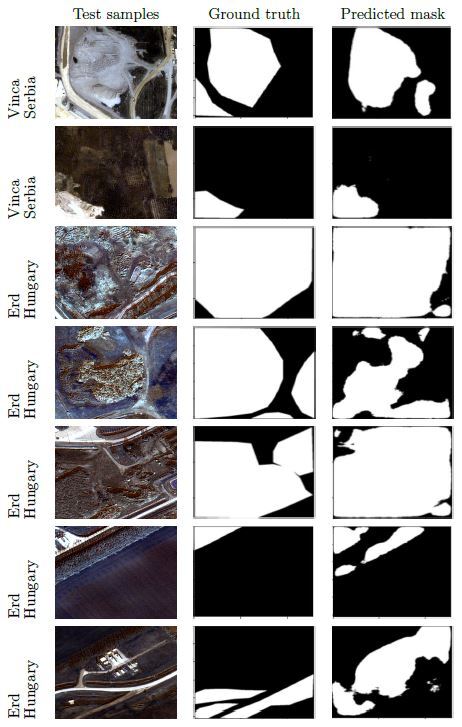

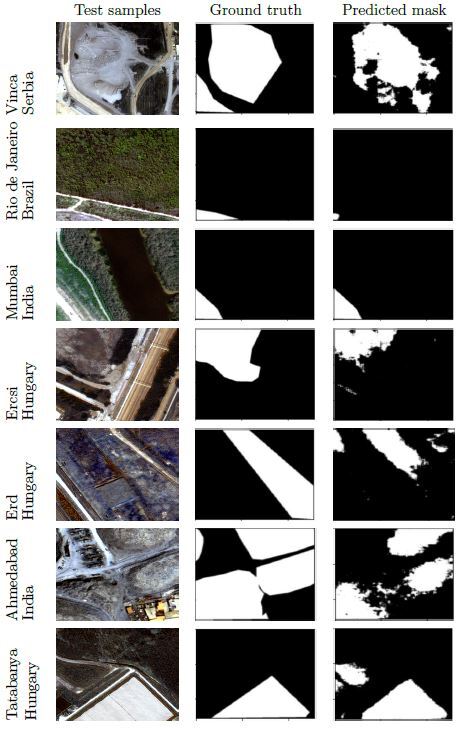

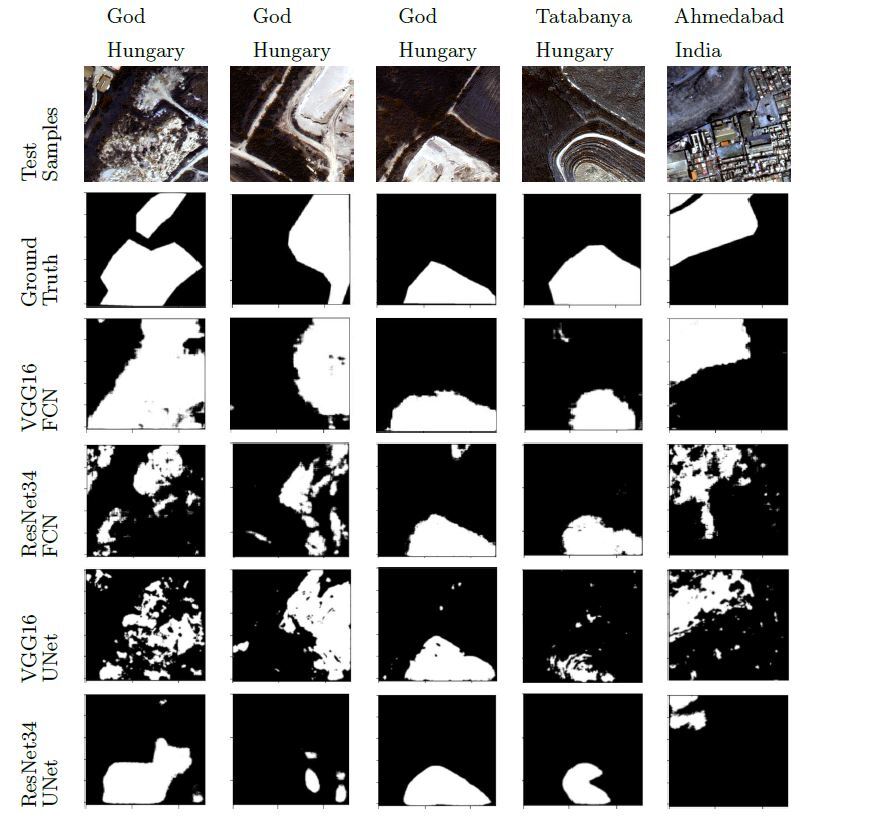

Comparison of segmentation results

ESA THIRD PARTY MISSION (TPM)

The satellite imagery used within this research project was obtained through the ESA Third Party Mission (TPM) Programme. This programme provides free data, such as Very High Resolution satellite imagery from European Space Imaging, to users for non-commercial research projects. Registered users can benefit from a large archive, with most of the data immediately accessible after successful registration. To learn more about this programme, or to become a registered user, visit the ESA TPM programme website.

About the researcher

Anupama Rajkumar completed her MSc in Autonomous Systems at Technische Universitaet, Berlin and Eotvos Lorand University, Budapest. The research in this project was carried out in collaboration with the Hungarian Academy of Sciences (SZTAKI), Budapest. Her research interests lie in the application of computer vision and machine learning to autonomous driving and earth observation.

She comes from India and before pursuing research in ML and CV, she worked as an embedded software engineer for several years at Bosch and John Deere in India.

LinkedIn: https://www.linkedin.com/in/anupama-rajkumar-910a222a/

Twitter: @AnupamaRajkumar

Related Stories

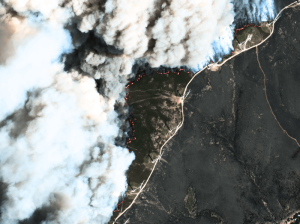

Maritime Domain Awareness in European Arctic Regions With VHR Satellite Intelligence

With the Arctic warming nearly four times faster than the average, the ice in the High North is melting and the sea is becoming increasingly navigable. In 2025 alone, 1812 vessels entered the Arctic Polar Code area, which is a 40% increase since 2013 when data collection began. While this rise in traffic presents potential commercial opportunities, more vessels also mean more risks to people and resources.

Seeing More in a Single Day: The Value of Intraday Satellite Collections

Imagine a convoy moves between 08:00 and 11:00. Or morning clouds clear by 14:00, revealing new activity. Or a structure appears at 09:30 that wasn’t visible at 07:00. If you’re working with once-daily satellite passes, you miss all of this. With intraday collections, you see it happen.

GEOSeries: Maintaining Temporal Control of Developing Situations With Rapid Satellite Tasking and Intraday Imaging

In the fast-moving operational environments of security monitoring and emergency response, the value of satellite data is defined by when it arrives, not just its resolution or accuracy. This webinar explores how Dynamic Tasking enables users to access actionable data fast and operate within mission decision windows.

VHR Satellite Images Show Damage After Niscemi Landslide

In January 2026, Italy declared state of emergency after being hit by Cyclone Harry – a storm that brought 10-metre waves and torrential rains of over 300 mm in 48 hours. The most severely affected regions were Sicily, Calabria and Sardinia, with the damage in Sicily alone estimated to be more than 1.5 billion euros. EUSI collected Very High Resolution satellite imagery of the affected areas, including Niscemi – a Sicilian town hit by a massive landslide.